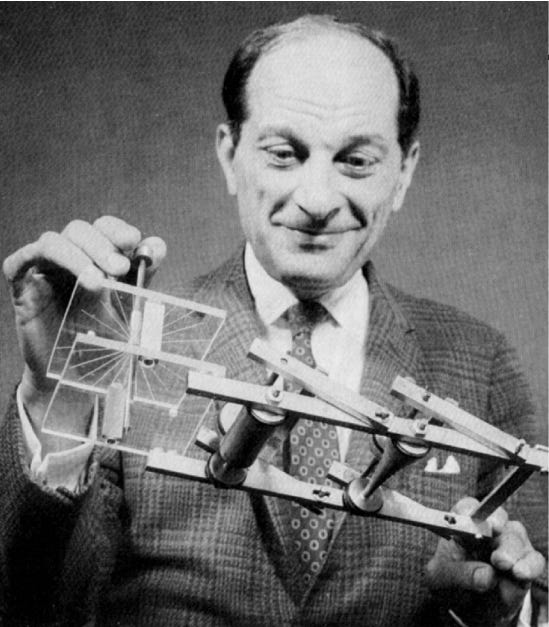

Today in 1909, mathematician Stanislaw Ulam was born.

In his autobiography, Adventures of a Mathematician (1976), Ulam wrote:

The idea for what was later called the Monte Carlo method occurred to me [in 1946] when I was playing solitaire during my illness. I noticed that it may be much more practical to get an idea of the probability of the successful outcome of a solitaire game (like Canfield or some other where the skill of the player is not important) by laying down the cards, or experimenting with the process and merely noticing what proportion comes out successfully, rather than try to compute all the combinatorial possibilities which are an exponentially increasing number so great that, except in very elementary cases, there is no way to estimate it. This is intellectually surprising, and if not exactly humiliating, it gives one a feeling of modesty about the limits of rational or traditional thinking. In a sufficiently complicated problem, actual sampling is better than an examination of all the chain of possibilities…. It so happened that computing machines were coming into existence, and here was something suitable for machine calculation.

Physicist Nicholas Metropolis in “The Beginning of the Monte Carlo Method” (1987):

Stan's extensive mathematical background made him aware that statistical sampling techniques had fallen into desuetude because of the length and tediousness of the calculations. But with this miraculous development of the ENIAC—along with the applications Stan must have been pondering—it occurred to him that statistical techniques should be resuscitated, and he discussed this idea with von Neumann. Thus was triggered the spark that led to the Monte Carlo method…

I suggested an obvious name for the statistical method—a suggestion not unrelated to the fact that Stan had an uncle who would borrow money from relatives because he "just had to go to Monte Carlo." The name seems to have endured.

It is interesting to look back over two score years and note the emergence, rather early on, of experimental mathematics, a natural consequence of the electronic computer. The role of the Monte Carlo method in reinforcing such mathematics seems self-evident… It is, in fact, the coupling of the subtleties of the human brain with rapid and reliable calculations, both arithmetical and logical, by the modem computer that has stimulated the development of experimental mathematics. This development will enable us to achieve Olympian heights.

To this day, the Monte Carlo Method or Monte Carlo Simulation is used in a wide variety of fields, including physics, biology, finance and risk assessment, manufacturing, insurance project management, research and development, oil and gas and engineering.

One successful application of the Monte Carlo Method has been as a heuristic search algorithm, Monte Carlo tree search, mostly used in software that plays board games. In March 2016, AlphaGo, an AI program from Google’s Deep Mind which combines Deep Learning with Monte Carlo tree search, defeated Go champion Lee Sedol in a five-game match with a final score of four games to one. Modern AI has arrived, certainly in terms of worldwide mass attention.

David Silver and Demis Hassabis, Google DeepMind:

Go is a game of profound complexity. The search space in Go is vast -- more than a googol times larger than chess (a number greater than there are atoms in the universe!). As a result, traditional “brute force” AI methods -- which construct a search tree over all possible sequences of moves -- don’t have a chance in Go. To date, computers have played Go only as well as amateurs. Experts predicted it would be at least another 10 years until a computer could beat one of the world’s elite group of Go professionals.

We saw this as an irresistible challenge! We started building a system, AlphaGo, described in a paper in Nature this week, that would overcome these barriers. The key to AlphaGo is reducing the enormous search space to something more manageable. To do this, it combines a state-of-the-art tree search with two deep neural networks, each of which contains many layers with millions of neuron-like connections. One neural network, the “policy network,” predicts the next move, and is used to narrow the search to consider only the moves most likely to lead to a win. The other neural network, the “value network,” is then used to reduce the depth of the search tree -- estimating the winner in each position in place of searching all the way to the end of the game.

AlphaGo’s search algorithm is much more human-like than previous approaches. For example, when Deep Blue played chess, it searched by brute force over thousands of times more positions than AlphaGo. Instead, AlphaGo looks ahead by playing out the remainder of the game in its imagination, many times over -- a technique known as Monte-Carlo tree search. But unlike previous Monte-Carlo programs, AlphaGo uses deep neural networks to guide its search. During each simulated game, the policy network suggests intelligent moves to play, while the value network astutely evaluates the position that is reached. Finally, AlphaGo chooses the move that is most successful in simulation.